
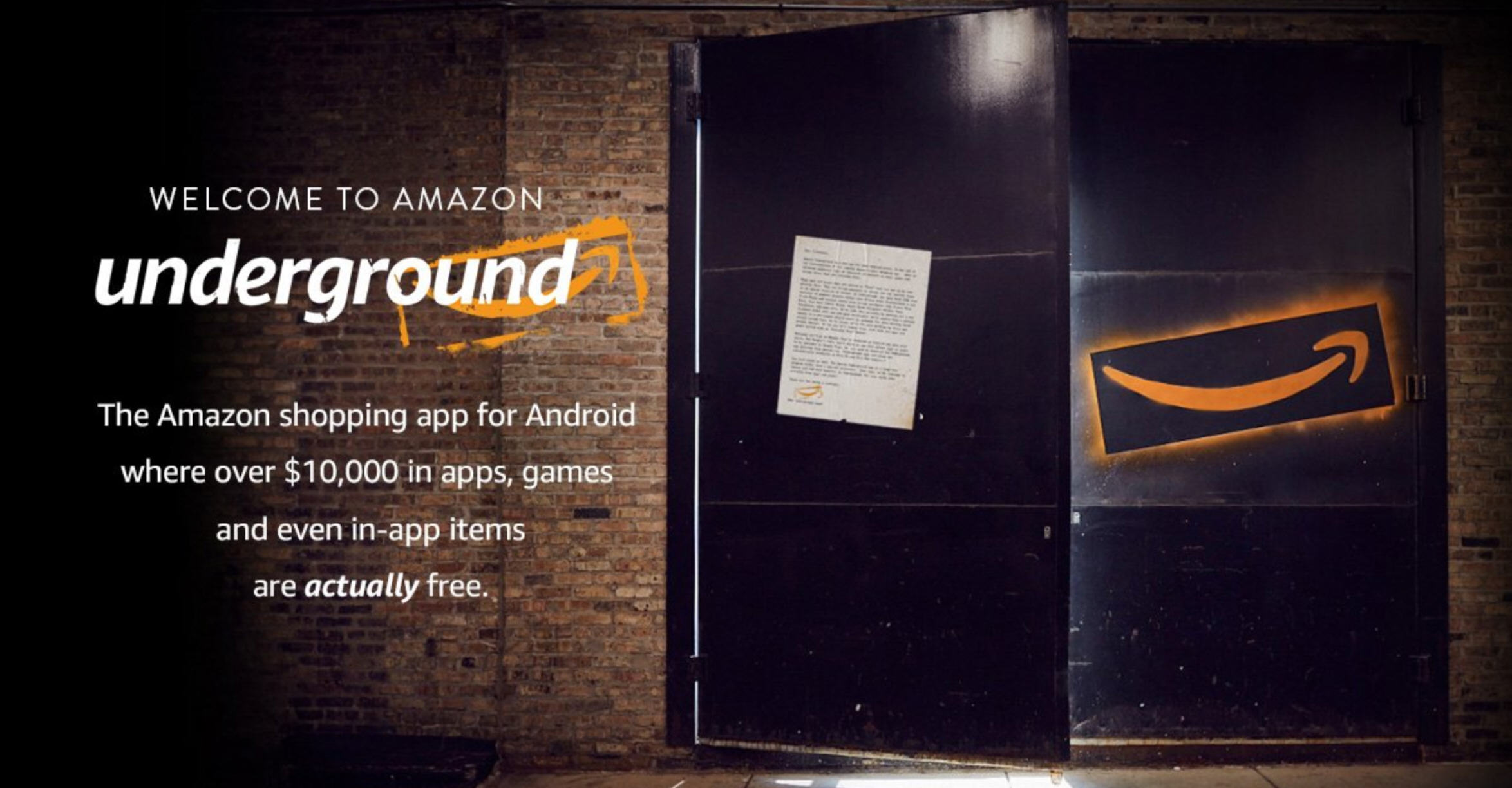

1/30/2024 Amazon underground anomaly 2

Amazon is giving away over $10k in apps and in-app purchases as part of a new service entitled Amazon Underground. The apps will be fully free, meaning all of their usual in-app purchases are also free.

While you pull data from DynamoDB, we recommend that you choose only the columns needed for model training and write them to Amazon S3 as CSV files. You can do this by using the ApplyMapping.apply() function in AWS Glue. In this example, only the transaction_id and ridecount columns are mapped. In addition, when you run the write_dynamic_frame.from_options function, you also need to pass the format_options argument.
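To make the column-selection step concrete, here is a minimal plain-Python sketch of what the mapping does to each item: keep only transaction_id and ridecount, then serialize the result as CSV. It is a stand-in for illustration, not the Glue API itself. The mapping tuples only imitate the (source, source type, target, target type) shape that Glue mappings use, and the "string" types, the sample items, and the helper names are all assumptions.

```python
import csv
import io

# Mapping tuples in the (source, source_type, target, target_type) shape.
# The "string" types are assumptions; the source does not state them.
MAPPINGS = [
    ("transaction_id", "string", "transaction_id", "string"),
    ("ridecount", "string", "ridecount", "string"),
]


def select_columns(records, mappings):
    """Keep only the mapped columns, renamed to their target names."""
    return [
        {target: str(record[source]) for source, _, target, _ in mappings}
        for record in records
    ]


def to_csv(rows, columns):
    """Serialize rows as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical items, shaped like rows read from the DynamoDB table.
items = [
    {"transaction_id": "1", "ridecount": "10844", "pickup_zone": "A"},
    {"transaction_id": "2", "ridecount": "7864", "pickup_zone": "B"},
]

rows = select_columns(items, MAPPINGS)
csv_text = to_csv(rows, ["transaction_id", "ridecount"])
print(csv_text)
```

Dropping unused columns before writing keeps the training files small and avoids feeding unrelated attributes to the model.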

The following diagram shows the overall architecture of the solution. The steps that data follows through the architecture are as follows:

1. The source DynamoDB table captures changes and stores them in a DynamoDB stream.
2. An AWS Glue job regularly retrieves data from the target DynamoDB table and runs a training job using Amazon SageMaker to create or update model artifacts on Amazon S3.
3. The same AWS Glue job deploys the updated model on the Amazon SageMaker endpoint for real-time anomaly detection based on Random Cut Forest.
4. An AWS Lambda function polls data from the DynamoDB stream and invokes the Amazon SageMaker endpoint to get inferences.
5. The Lambda function alerts user applications after anomalies are detected.

The first section, “Building the auto-updating model,” explains how steps 1, 2, and 3 can be automated using AWS Glue. All of the sample scripts in this section run in one AWS Glue job. The second section, “Detecting anomalies in real time,” shows how the AWS Lambda function handles steps 4 and 5 for anomaly detection.

This section explains how AWS Glue reads a DynamoDB table and automatically trains and deploys an Amazon SageMaker model. I assume that the DynamoDB stream is already enabled and DynamoDB items are being written to the stream. If you have not set these up yet, you can reference these documents for more information: Capturing Table Activity with DynamoDB Streams, DynamoDB Streams and AWS Lambda Triggers, and Global Tables. In this example, a DynamoDB table (“taxi_ridership”) in the us-west-2 Region is replicated to another DynamoDB table with the same name in the us-east-1 Region using DynamoDB Global Tables.

To prepare data for model training, we’ll store our data in DynamoDB. The AWS Glue job retrieves data from the target DynamoDB table by using create_dynamic_frame_from_options() with a dynamodb connection_type argument.
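The read described above might look roughly like the sketch below. It assumes it runs inside an AWS Glue job, where a GlueContext is available. The helper name read_ridership_table and the read-percent value are illustrative assumptions, while "taxi_ridership" is the table name from the example.

```python
def read_ridership_table(glue_context, table_name="taxi_ridership", read_percent="0.5"):
    """Read a DynamoDB table into a Glue DynamicFrame.

    glue_context is expected to be an awsglue.context.GlueContext created
    inside a Glue job, for example GlueContext(SparkContext.getOrCreate()).
    """
    return glue_context.create_dynamic_frame_from_options(
        connection_type="dynamodb",
        connection_options={
            # Table to scan.
            "dynamodb.input.tableName": table_name,
            # Share of the table's read capacity the job may consume
            # (an assumed value; adjust for your workload).
            "dynamodb.throughput.read.percent": read_percent,
        },
    )
```

Passing the context in as a parameter keeps the helper importable outside Glue, which makes it easier to check the connection options with a stub before deploying the job.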
For example, if you have been using Amazon DynamoDB Streams for disaster recovery (DR) or other purposes, you can use the data in that stream for anomaly detection. In addition, stand-by storage usually has low utilization, so the lightly used data there can serve as training data. You can automatically retrain the model with new data on a regular basis with no user intervention. You can easily use the built-in Random Cut Forest algorithm in Amazon SageMaker. Amazon SageMaker offers flexible distributed training options that adjust to your specific workflows in a secure and scalable environment.
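The real-time path, in which a Lambda function reads the DynamoDB stream and asks the SageMaker endpoint for anomaly scores, could be sketched as follows. This is an illustration under several assumptions, not the original implementation: the endpoint name and score threshold are hypothetical, the stream is assumed to include new item images, and the model is assumed to take the single ridecount value as its input feature. How anomalies are delivered to user applications is left open here, so the handler simply returns them.

```python
import json

ENDPOINT_NAME = "taxi-ridership-rcf"  # hypothetical endpoint name
SCORE_THRESHOLD = 3.0                 # hypothetical cutoff; tune on your own data


def extract_ridecounts(event):
    """Collect ridecount values from INSERT/MODIFY records of a stream event."""
    values = []
    for record in event.get("Records", []):
        if record.get("eventName") not in ("INSERT", "MODIFY"):
            continue
        image = record.get("dynamodb", {}).get("NewImage", {})
        attr = image.get("ridecount")
        if attr is None:
            continue
        # Stream images carry typed values, e.g. {"N": "123"} or {"S": "123"}.
        raw = attr.get("N", attr.get("S"))
        if raw is None:
            continue
        values.append(float(raw))
    return values


def score_values(values, runtime_client, endpoint_name=ENDPOINT_NAME):
    """Send one CSV line per value to the endpoint and return the scores."""
    body = "\n".join(str(v) for v in values)
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",
        Body=body,
    )
    result = json.loads(response["Body"].read())
    return [item["score"] for item in result["scores"]]


def lambda_handler(event, context, runtime_client=None):
    """Score new stream records and return those above the threshold."""
    if runtime_client is None:
        import boto3  # imported lazily so the helpers can be tested without AWS
        runtime_client = boto3.client("sagemaker-runtime")

    values = extract_ridecounts(event)
    if not values:
        return {"anomalies": []}

    scores = score_values(values, runtime_client)
    anomalies = [
        {"ridecount": value, "score": score}
        for value, score in zip(values, scores)
        if score > SCORE_THRESHOLD
    ]
    return {"anomalies": anomalies}
```

A fixed threshold is the simplest choice. In practice you would likely derive the cutoff from the score distribution of the training data.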
